Is New Wave Feminism Really Feminism?

I know what you're thinking... but just hear me out.

To begin, feminism is commonly interpreted as women being considered equal to men in the eyes of society. The question I am asking is: isn't it more important for women to be equal among each other first? What I mean is that women are constantly bringing each other down, judging, and being concerned with ego. For example, a woman posts a picture of herself in a swimsuit and she has stretch marks, for she is the mother of three beautiful children. This woman then proceeds to receive hate comments, for in the 21st century it is apparently abnormal to have stretch marks, even after having children (unless you're Kylie Jenner). Does anybody acknowledge the fact that she is a mother and proud? Absolutely not. What matters today isn't what kind of person you are; your likes are based on how you look and, to some extent, on your social standing as measured by the material things you own.

To be clear, this isn't a hate rant; it's simply an observation. Think about it: who is most likely to receive millions of likes, a woman who has the average body of a mother of three, or someone who has had her body paid for and shows off her bare ass on social media? Not that there is anything wrong with nudity or being confident in oneself, but at some point can we be realistic? No woman wants to admit it, but I'll be the one to: we bring each other down to make ourselves feel better about our insecurities. So in reality, every time a woman posts a picture of herself shaking her naked butt on Instagram, she is essentially asking for acceptance. Somebody's husband, boyfriend, or baby daddy is looking at those pictures while his woman sits at home feeling bad about herself as she scrolls through her significant other's recently liked photos. It seems as though we have been so focused on being able to have sex and show our bodies off that we've actually been taking steps back when it comes to feminism.

In reality, the only supporter a woman can truly have is another woman. Nobody understands the sexual harassment, the pressure of how to look, or the roller coaster of emotions we are subject to, except another woman. However, there seems to be a newer wave of feminism taking hold of our society, and it has to do with a complete absence of femininity. It is okay to flirt with a man in a relationship, because what effect does it have on you? It is okay to bash a woman who wants to be a stay-at-home mom because CLEARLY the definition of feminism means to never have kids, never get married, and only be career oriented. It is okay to make faces and show disgust towards a woman who breastfeeds in public because boobs are only used as an object for the male brain (ironic?). At this point in time, women should know they are, in fact, quite superior to men. We know how to take pain, put up with bullshit, and love better than our genetic counterpart. With that being said, continuing to be a sex object, whether that means being a mistress, being an Insta-hoe, or having a sugar daddy, tears women apart and puts men above us again. In order to be a supporter of feminism, women have to be kept in mind, and that includes all kinds of women, from the prudes to the freaks.

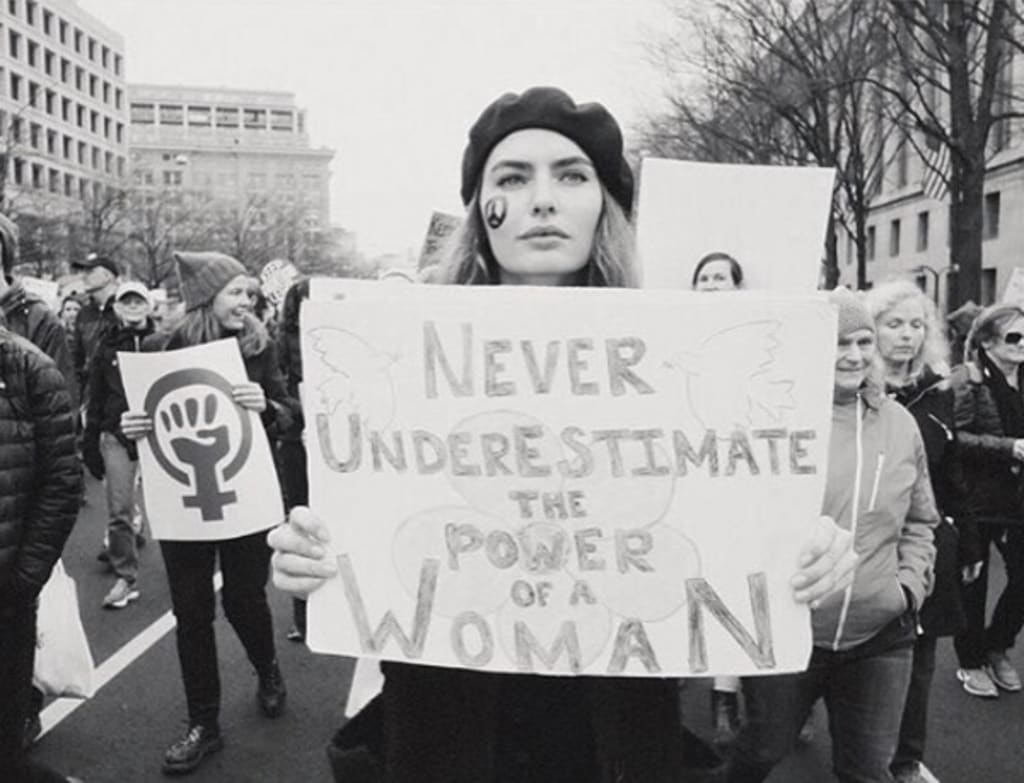

Moreover, I feel as though we need to take a long, hard look at what is happening right in front of us and realize that only a woman can truly be a feminist, and in order to do that, we need to come together.
